We are grateful to the following organizations whose support sustains our research.

ACTIVE RESEARCH

Our ongoing research is broadly in the areas of deep learning, computer vision and non-convex optimization, with applications in e-learning, security, disaster management and agriculture. If you are interested, please contact us for further information.

Some of the past projects I have been involved in are listed below:

PAST PROJECTS

- Social Interaction Assistant for Individuals who are Blind or Visually Impaired

Provide a user who is blind with information that can improve their social interactions with sighted counterparts. Current research includes user-specific body gesture recognition using RGB-D data, multimodal emotion recognition, the design of processing-delivery interfaces (such as Emotion Markup Language), and user studies for understanding non-verbal communication involving individuals with visual impairments. Please see this link on the CUbiC website for more details.

Collaborators: Sethuraman Panchanathan, Sreekar Krishna, Troy McDaniel (CUbiC, ASU), Terri Hedgpeth (Disability Resource Center, ASU), Artemio Ramirez (Dept of Communication, University of South Florida)

- Alliance for

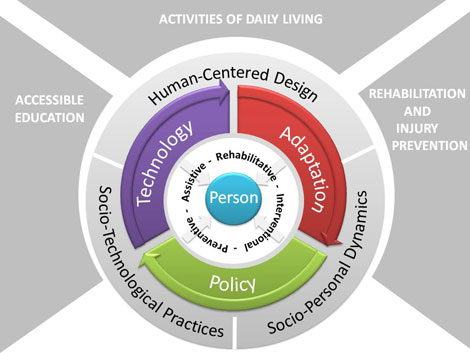

Person-centered Accessible Technologies (APAcT)

NSF IGERT program on the design and development of person-centered technologies and practices for individuals with disabilities. The interdisciplinary program includes students from Computer Science & Engineering, Bioengineering, Science/Special Education, and Human Dimensions of Science & Technology (Science Policy). Please see the APAcT IGERT website for more details.

Collaborators (Selected): Sethuraman Panchanathan (CUbiC, ASU), Jay Klein (School of Social Work, ASU), Forouzan Golshani (California State University Long Beach), Mohammad Forouzesh (California State University, Long Beach)

- Socio-Assistive Technologies for Children with Autism

Develop cyber-physical systems using wearable and environment-based sensing technologies to understand the social behavior of children with autism, as well as to provide means for early intervention. Current research includes the design of biosensing wearables to measure physiological signals in toddlers with or at risk for autism, and the recognition of emotional states from multimodal signals. Please see this link on the CUbiC website for more details.

Collaborators: Jeanne Wilcox (Infant Child Research Programs, ASU), Daniel Openden (Southwest Autism Resource and Research Center), Troy McDaniel, Sethuraman Panchanathan (CUbiC, ASU), Galina Mihaleva (School of Dance, ASU)

- Training Radiologists through Eye Tracking

Develop methods to train new radiologists by learning regions of interest in radiological images (chest X-rays, in particular) from the eye-gaze patterns of expert radiologists, captured with eye-tracking technology. Role included working with a graduate student on developing computational methods for the analysis of eye-gaze patterns.

Collaborators: John Black, Mohammad Alzubaidi (CUbiC, ASU)

- Multimodal Biometrics (Face and Speech) for Robust Person Recognition

Design and develop a robust multimodal person recognition system using face and speech modalities, which are non-intrusive and can provide at-a-distance solutions for assistive, security and surveillance applications. Role included the development of reliable confidence estimation methods for multimodal decision support contexts. Please see this link on the CUbiC website for more details.

Collaborators: Juan-Arturo Nolazco, Paola Leibny Garcia, Roberto Aceves (Tecnológico de Monterrey, Mexico)

- Risk Prediction for Cardiac Patients following a Stent Procedure

Develop computational models for predicting risk in patients who have undergone a drug-eluting stent procedure, using data from a high-volume cardiology practice serving patients across Arizona. Role included the development of models for reliable confidence estimation in cardiac decision support, as well as working with a graduate student in Biomedical Informatics on kernel-based methods for risk prediction. Please see this link on the CUbiC website for more details.

Collaborators: Ambika Bhaskaran, Jennifer Vermillion, Jenni Harris (Advanced Cardiac Specialists, Arizona)

- Ambient Interactive Shopping Environment for the Blind

Enable individuals with visual impairments to shop independently with the use of wearable technologies. Please see this link on the CUbiC website for more details.

Collaborators: Terri Hedgpeth, Sreekar Krishna, Narayanan Krishnan (CUbiC, ASU)

- Dimensionality Reduction Toolbox

A stand-alone GUI-based application that combines the steps required to import data, manipulate the data via reduction techniques, and view the resulting output. The D.R. Toolbox accepts input from CSV files and images; reduces the data using any of the techniques available in the MATLAB version of the toolbox; and displays the reduction results in either dataset or 2D graph views. This is an open-source project and is available on Google Code.
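The toolbox's own code is not reproduced here. As a hedged illustration of the kind of step it automates, the sketch below reduces tabular data (for example, rows read from a CSV file) to two dimensions for a 2D graph view using principal component analysis (PCA), one common reduction technique. It uses power iteration with deflation in plain Python; the function names (`pca`, `top_eigvec`, `covariance`, `mean_center`) are hypothetical and are not the D.R. Toolbox's API. A production tool would normally call a numerical library's eigensolver instead.

```python
import math
import random


def mean_center(X):
    """Subtract the per-column mean from every row; return centred rows and the means."""
    n, d = len(X), len(X[0])
    mu = [sum(row[j] for row in X) / n for j in range(d)]
    return [[row[j] - mu[j] for j in range(d)] for row in X], mu


def covariance(Xc):
    """Sample covariance matrix of already-centred rows."""
    n, d = len(Xc), len(Xc[0])
    return [[sum(r[i] * r[j] for r in Xc) / (n - 1) for j in range(d)] for i in range(d)]


def top_eigvec(C, iters=500, seed=0):
    """Power iteration: dominant eigenvalue and unit eigenvector of a symmetric matrix C."""
    rng = random.Random(seed)
    d = len(C)
    v = [rng.random() + 0.1 for _ in range(d)]
    for _ in range(iters):
        w = [sum(C[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        if norm == 0:
            break
        v = [x / norm for x in w]
    # Rayleigh quotient gives the eigenvalue for the (unit) vector v
    lam = sum(v[i] * sum(C[i][j] * v[j] for j in range(d)) for i in range(d))
    return lam, v


def pca(X, k=2):
    """Project rows of X onto their top-k principal components."""
    Xc, _ = mean_center(X)
    C = covariance(Xc)
    comps = []
    for _ in range(k):
        lam, v = top_eigvec(C)
        comps.append(v)
        # Deflation: remove the found component so the next pass finds the next one
        d = len(C)
        C = [[C[i][j] - lam * v[i] * v[j] for j in range(d)] for i in range(d)]
    Y = [[sum(r[j] * c[j] for j in range(len(r))) for c in comps] for r in Xc]
    return Y, comps
```

Each row of `Y` is a 2D point that could be plotted directly; the sign of each component is arbitrary, as with any eigenvector-based method.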

- Movement Analysis in Surgical Tasks

Kinematic analysis of surgical skill gestures using human anatomy-driven Hidden Markov Models (HMMs). In this work, we proposed a novel system that combines surgical gesture segmentation, surgical gesture recognition, and expertise analysis of surgical profiles in minimally invasive surgery (MIS).

Collaborators: Kanav Kahol, Narayanan Krishnan (CUbiC, ASU), Mark Ferrara, John Smith (Banner Good Samaritan Hospital, Arizona)
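The anatomy-driven HMM structure used in this work is not described here, so the following is only a generic sketch of how an HMM can segment and recognize gestures, assuming discrete (quantized) motion observations and one hidden state per gesture. Viterbi decoding assigns the most likely gesture label to every frame, and runs of identical labels then give the segmentation. The gesture names, observation symbols, probabilities, and the functions `viterbi` and `segments` are hypothetical.

```python
import math


def viterbi(obs, states, start_p, trans_p, emit_p):
    """Most likely hidden-state sequence for a non-empty observation list (log-space)."""
    def log(p):
        return math.log(p) if p > 0 else float("-inf")

    # best[t][s]: log-probability of the best path that ends in state s at time t
    best = [{s: log(start_p[s]) + log(emit_p[s][obs[0]]) for s in states}]
    back = [{}]
    for t in range(1, len(obs)):
        best.append({})
        back.append({})
        for s in states:
            prev, score = max(
                ((r, best[t - 1][r] + log(trans_p[r][s])) for r in states),
                key=lambda item: item[1],
            )
            best[t][s] = score + log(emit_p[s][obs[t]])
            back[t][s] = prev
    # Trace back from the best final state
    path = [max(states, key=lambda s: best[-1][s])]
    for t in range(len(obs) - 1, 0, -1):
        path.append(back[t][path[-1]])
    return path[::-1]


def segments(labels):
    """Collapse per-frame labels into (label, first_frame, last_frame) segments."""
    out = []
    for i, lab in enumerate(labels):
        if out and out[-1][0] == lab:
            out[-1] = (lab, out[-1][1], i)
        else:
            out.append((lab, i, i))
    return out


if __name__ == "__main__":
    # Hypothetical two-gesture model over quantized tool speed
    states = ["reach", "grasp"]
    start = {"reach": 0.9, "grasp": 0.1}
    trans = {"reach": {"reach": 0.8, "grasp": 0.2}, "grasp": {"reach": 0.1, "grasp": 0.9}}
    emit = {"reach": {"fast": 0.8, "slow": 0.2}, "grasp": {"fast": 0.1, "slow": 0.9}}
    frames = ["fast", "fast", "fast", "slow", "slow", "slow"]
    print(segments(viterbi(frames, states, start, trans, emit)))
```

For these made-up numbers the decoder labels the three fast frames as `reach` and the three slow frames as `grasp`, yielding two segments.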

- Video Mosaicing in MPEG Domain

A unified system for temporal segmentation and motion estimation in the MPEG-1/MPEG-2 domain. Camera motion parameters were computed for each shot using the MPEG motion vectors; frames from a shot were then aligned and integrated into a static mosaic for that shot. The proposed system was found to be about 200% faster at the registration step on our input video sequences than techniques operating in the uncompressed domain. This was a project from the Defence Research and Development Organisation (DRDO), India.
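The camera model used in the project is not stated above. As a minimal sketch, assuming a pan-and-zoom model in which a macroblock centred at (x, y) moves by u = a*x + tx and v = a*y + ty (with a = s - 1 for a zoom factor s), the three parameters can be fitted to one frame's motion vectors by closed-form least squares. The function name `fit_pan_zoom`, the model, and the sign convention of the vectors are all assumptions made for illustration.

```python
def fit_pan_zoom(blocks):
    """Least-squares fit of u = a*x + tx, v = a*y + ty.

    blocks: list of (x, y, u, v), i.e. a macroblock centre (x, y) and its
    motion vector (u, v) in pixels. Returns (a, tx, ty), where a = zoom - 1.
    """
    n = len(blocks)
    mx = sum(b[0] for b in blocks) / n
    my = sum(b[1] for b in blocks) / n
    mu = sum(b[2] for b in blocks) / n
    mv = sum(b[3] for b in blocks) / n
    # Minimising the squared residuals over a shared zoom term gives this ratio
    num = sum((x - mx) * (u - mu) + (y - my) * (v - mv) for x, y, u, v in blocks)
    den = sum((x - mx) ** 2 + (y - my) ** 2 for x, y, _, _ in blocks)
    a = num / den if den else 0.0
    # Translations follow from the centroid equations
    return a, mu - a * mx, mv - a * my
```

Because foreground objects also produce motion vectors, a practical system would typically discard vectors with large residuals and refit before aligning the frames into the mosaic.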